Code Review Culture: What Makes a Review Actually Useful

After reviewing approximately 3,000 pull requests over the past six years, I am convinced that most code reviews are performative. The reviewer skims the diff, leaves a comment about a variable name, approves, and moves on. The author addresses the naming nit, merges, and ships a bug that the review should have caught. The process consumed 30 minutes of two engineers’ time and produced zero value. This post is about building a code review culture where reviews consistently catch real problems and improve the codebase, based on what we have learned at Harbor Software.

The Three Things a Review Should Catch

A useful code review catches problems in three categories, ranked by severity:

1. Correctness bugs. Does the code do what it claims to do? Does it handle edge cases? Does it match the requirements? This is the highest-value contribution a reviewer can make, and paradoxically, it is the one most reviews skip. Reviewers default to style comments because style is easy to evaluate (“this variable name is unclear”) while correctness requires understanding the problem domain (“this discount calculation does not handle the case where a user’s tier changes mid-transaction”). Catching a correctness bug in review saves orders of magnitude more time than catching it in production, where it requires incident response, customer communication, and a hotfix deployment.

2. Design problems. Is the code structured in a way that will be maintainable? Are abstractions at the right level? Is there duplication that should be extracted? Will this change make the next change harder or easier? Design feedback is more subjective than correctness feedback, but it is where experienced engineers provide the most leverage. A design problem that is easy to fix now becomes extremely expensive to fix after six months of code is built on top of it. The classic example is a function that does too many things: it is easy to split now but requires a major refactoring later when ten other functions depend on its current interface.

3. Style and convention. Does the code follow the team’s conventions? Are names consistent with existing patterns? Is the formatting standard? This is the lowest-value category because it can and should be automated. If your review comments are predominantly about style, you need better linting, not better reviews.

Our internal guideline is: at least 60% of review comments should be about correctness or design. If more than 40% of comments are about style, your tooling is failing you. We track this metric monthly by categorizing a random sample of 50 review comments.

How to Review for Correctness

Reviewing for correctness requires more effort than scanning a diff. Here is the process our most effective reviewers follow:

Step 1: Read the PR description first. Understand what the change is supposed to do before you look at the code. If the PR description is missing or says “fixed stuff,” send it back. A PR without a description is not ready for review. Our PR template requires: what changed, why it changed, how to test it, and any risks or tradeoffs. The description gives the reviewer a mental model to compare the code against. Without it, the reviewer is reading code without knowing what it should accomplish, which makes it nearly impossible to catch correctness bugs.

Step 2: Look at the test changes first. Before reading the implementation, read the new and modified tests. The tests tell you what the author thinks the code should do. If the tests are insufficient (missing edge cases, no error path tests, happy path only), the most valuable review feedback is about what tests are missing, not about the implementation. We have found that reviewing tests first catches more bugs than reviewing implementation first, because it forces you to think about behavior (“what should happen when…”) rather than implementation (“is this code correct”).

Step 3: Trace the data flow. For any change that touches data (which is most changes), trace the data from input to output. Where does the data enter the system? What transformations are applied? Where is it stored? Where is it read back? Look for: missing validation at entry points, type conversions that lose precision (integer to float, date to string), error handling that swallows failures, concurrent access without synchronization, and missing tenant filtering on multi-tenant queries.

Step 4: Check the boundaries. For any conditional logic, ask: what happens at the boundary? If the code checks if (count > 10), what happens when count is exactly 10? If the code filters an array, what happens when the array is empty? If the code calls an external service, what happens when the service returns an error, a timeout, or an unexpected response format? Boundary conditions are where the majority of production bugs live, and they are the conditions most frequently omitted from tests.

Step 5: Consider the failure modes. What is the worst thing that can happen if this code has a bug? If the answer is “a customer sees the wrong data” or “we charge someone twice,” the review standard should be higher than if the answer is “a non-critical UI element is misaligned.” Not all code deserves the same level of scrutiny. Reviewing a payment processing change with the same intensity as a copy edit is a misallocation of engineering time. We label PRs as “critical” (payment, auth, data migration), “standard” (feature work), or “low-risk” (documentation, test-only, config changes) and set review expectations accordingly.

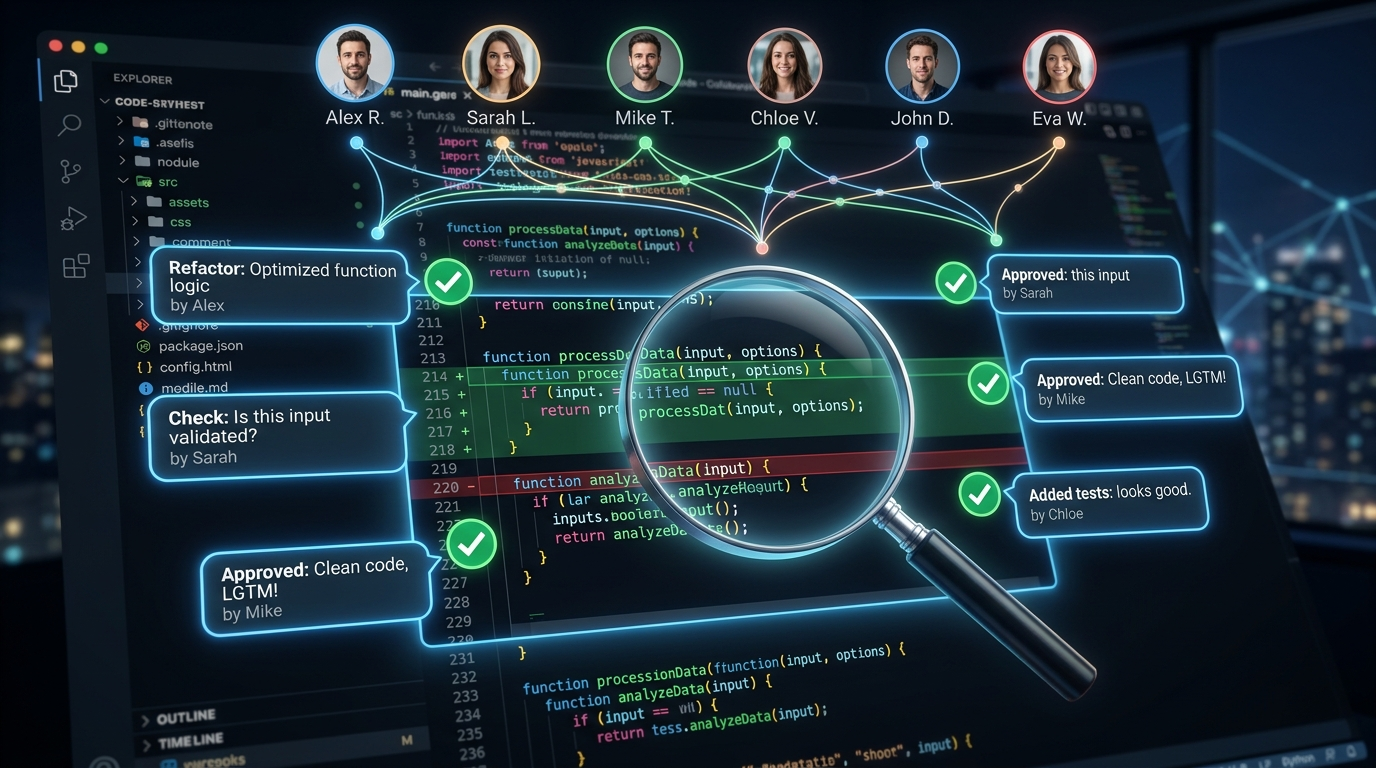

Writing Review Comments That Help

The way you write review comments determines whether the author learns from the review or just grudgingly addresses the feedback. Specific patterns that work:

Ask questions instead of making demands. “What happens if user.email is null here?” is more useful than “Handle the null case.” The question prompts the author to think about the edge case, which helps them internalize the pattern for future code. The demand treats the author as an implementer of the reviewer’s specification rather than a co-designer of the solution. Questions also leave room for the author to say “that case cannot happen because of X” which might teach the reviewer something about the codebase.

Explain the why, not just the what. “This should use === instead of ==” is less useful than “This should use === because == with a string and number here would coerce '0' to 0, which would treat a zero-balance account as falsy and skip the notification.” The second version teaches; the first just corrects. Review comments that explain the reasoning help the author avoid the same class of bug in future code, not just this specific instance.

Distinguish between blocking and non-blocking comments. We use a prefix convention:

// Blocking: must be addressed before merge

[BLOCK] This query is vulnerable to SQL injection because the

user-supplied sortBy parameter is interpolated directly into

the ORDER BY clause. Use a whitelist of allowed column names.

// Suggestion: should probably be addressed but use your judgment

[SUGGEST] Consider extracting this 40-line function into two

functions. The data fetching and the transformation are separate

concerns and would be easier to test independently.

// Nit: take it or leave it, purely stylistic

[NIT] The variable name 'd' could be more descriptive. Maybe

discountPercentage? Totally optional.This convention eliminates the ambiguity of “does the reviewer expect me to change this or are they just thinking out loud?” Every team I have worked with that adopted this convention reported fewer review round-trips and faster merge times. The author knows exactly which comments require action and which are optional, so they can address all blocking comments in a single revision instead of going back and forth asking for clarification.

The Size Problem: Why Big PRs Get Bad Reviews

Research from Microsoft and Google consistently shows that review quality degrades sharply above 400 lines of diff. Reviewers spend more total time on large PRs but less time per line, and the defect detection rate drops from approximately 85% on PRs under 200 lines to under 45% on PRs over 1,000 lines. The reviewers’ attention fatigue is real and measurable. After about 200-300 lines of diff, the reviewer’s brain starts pattern-matching rather than thinking critically, and subtle bugs slip through.

Our PR size guidelines:

- Under 200 lines: Expected to be reviewed thoroughly within 4 hours of submission.

- 200-400 lines: Acceptable. May take up to 8 hours for review. Author should provide a guided tour in the PR description (“start reading at file X, which is the entry point, then follow the call chain to Y and Z”).

- 400-800 lines: Requires justification. The PR description must explain why it could not be split. Reviewer may request a walkthrough call before reviewing.

- Over 800 lines: Rejected. Must be split into smaller PRs. The only exceptions are auto-generated code (migrations, lockfiles) and initial scaffolding of a new service.

Splitting large changes into reviewable PRs is a skill that takes practice. The two most useful techniques are:

Stacked PRs: Break a feature into a sequence of PRs where each one builds on the previous. PR 1 adds the database schema and migration. PR 2 adds the data access layer. PR 3 adds the API endpoints. PR 4 adds the frontend. Each PR is independently reviewable and (ideally) independently deployable behind a feature flag. The key is that each PR should be shippable on its own: it should not break anything, even if the rest of the stack is not yet merged.

Preparatory refactoring: Before implementing a feature, submit a separate PR that refactors the existing code to make the feature implementation simpler. The refactoring PR should not change behavior (only move code around, extract functions, rename things). The feature PR then adds new behavior on top of the cleaner foundation. This produces two easy-to-review PRs instead of one hard-to-review PR that mixes refactoring with new behavior. The refactoring PR is easy to verify (run all existing tests, confirm they pass) and the feature PR is easy to understand (all changes are new behavior, no movement or renaming noise).

Review Turnaround Time Matters

A review that arrives 3 days after the PR was submitted is nearly worthless. The author has moved on to other work, their mental context on the change has evaporated, and addressing review feedback now requires expensive context switching. Studies show that context switching costs 15-25 minutes per switch for engineering work, so a review response that requires the author to context-switch back to a 3-day-old PR effectively costs 30-50 minutes before they even start addressing the feedback.

Our target is: first review within 4 business hours of submission. Not approval, just first review. The reviewer reads the PR, leaves their comments, and either approves or requests changes. The author addresses feedback and re-requests review. The second review round should happen within 2 hours. Most PRs merge within 1 business day of submission.

To make this work, every engineer allocates the first 30 minutes of their morning and 30 minutes after lunch to code review. This is not a suggestion; it is a scheduled calendar block. Review is part of the job, not an interruption to the job. Teams that treat review as a favor one engineer does for another end up with chronic review bottlenecks and a culture where PRs sit for days. When review is a scheduled activity with dedicated time, it happens consistently and promptly.

What We Automate

Everything that can be automated should be, so that human reviewers focus on what cannot be automated:

- Formatting: Prettier runs on commit via a pre-commit hook. No review comments about formatting. Ever.

- Linting: ESLint with strict rules runs in CI. No review comments about unused imports, missing semicolons, or

varvslet. - Type checking: TypeScript strict mode catches type errors before review. If the reviewer is pointing out type issues, the type system is not strict enough.

- Test coverage: Codecov comments on the PR with coverage changes. If new code has low coverage, the bot flags it so the reviewer can focus on whether the right things are tested, not whether tests exist at all.

- Security scanning: Snyk and VibeGuard run on every PR. Known vulnerability patterns are flagged automatically.

- PR size check: A GitHub Action comments a warning on PRs over 400 lines of non-generated diff. This is a nudge, not a block, but it makes authors think twice about large PRs.

After automating these six categories, approximately 15% of what previously appeared as review comments disappeared entirely. The remaining review comments were higher quality because reviewers were not spending cognitive energy on style issues that tools could handle.

We also automate reviewer assignment. Our CODEOWNERS file maps directories and file patterns to the engineers who are most qualified to review changes in those areas. This ensures that changes to the authentication service are reviewed by someone who understands authentication, not whoever happens to be available. The CODEOWNERS file is reviewed quarterly to ensure it reflects current team knowledge:

# CODEOWNERS

/src/auth/** @security-champion-1 @auth-lead

/src/payments/** @security-champion-2 @payments-lead

/src/api/middleware/** @security-champion-1

/infrastructure/** @devops-lead

*.sql @db-leadThe combination of automated style enforcement and automated reviewer assignment means that by the time a human reviewer opens a PR, the formatting is correct, the linter is satisfied, the types check, the security scanner has run, and the right person has been assigned to review. The reviewer can focus entirely on correctness and design, which is where human judgment is irreplaceable.

Measuring Review Effectiveness

We track four metrics to evaluate whether our review process is working:

- Escape rate: Percentage of production bugs that were introduced in code that passed review. Target: under 10%. Current: 7.2%. This is the ultimate metric: if bugs escape review, the review process is not working regardless of how many comments are written.

- Review turnaround: Time from PR submission to first review. Target: under 4 hours. Current: 2.8 hours median.

- Review comment ratio: Percentage of comments that are correctness/design vs. style. Target: at least 60% correctness/design. Current: 68%.

- Review rework rate: Percentage of PRs that require more than 2 review rounds. Target: under 20%. Current: 14%. A high rework rate indicates that either PRs are submitted before they are ready or review feedback is unclear.

One final observation from six years of code review: the quality of reviews improves dramatically when reviewers take breaks between reviewing different PRs. Reviewing three PRs back-to-back leads to attention fatigue by the third PR. Our most effective reviewers space their review sessions: they review one or two PRs in the morning block and one or two in the afternoon block, with productive coding work in between. The coding work keeps them mentally engaged and prevents the glazed-eye scanning that makes large review sessions unproductive. Review is cognitively demanding work that requires focused attention; treating it as a mindless task between meetings produces mindless results.

The teams I have seen with the strongest review cultures share a common trait: they view code review as a collaborative design conversation rather than a quality gate. The PR is not a finished product being inspected; it is a draft being refined through dialogue between the author and the reviewer. When both parties approach the review with curiosity rather than judgment, the process produces better code, better tests, and engineers who learn from each other continuously. That learning compounds over years, and it is the real return on investment from code review, far exceeding the value of any individual bug caught.

None of these metrics are perfect. Escape rate can be gamed by not attributing bugs to specific PRs. Comment ratio depends on honest categorization. But they are useful directional indicators that help us identify when the review process is degrading and needs attention. Code review is a skill, and like any skill, it improves with deliberate practice, feedback, and measurement.